YouTube is to stop recommending videos to teenagers that idealise specific fitness levels, body weights or physical features, after experts warned such content could be harmful if viewed repeatedly.

The platform will still allow 13- to 17-year-olds to view the videos, but its algorithms will not push young users down related content “rabbit holes” afterwards.

YouTube said such content did not breach its guidelines but that repeated viewing of it could affect the wellbeing of some users.

YouTube’s global head of health, Dr Garth Graham, said: “As a teen is developing thoughts about who they are and their own standards for themselves, repeated consumption of content featuring idealised standards that starts to shape an unrealistic internal standard could lead some to form negative beliefs about themselves.”

YouTube said experts on its youth and families advisory committee had said that certain categories that may be “innocuous” as a single video could be “problematic” if viewed repeatedly.

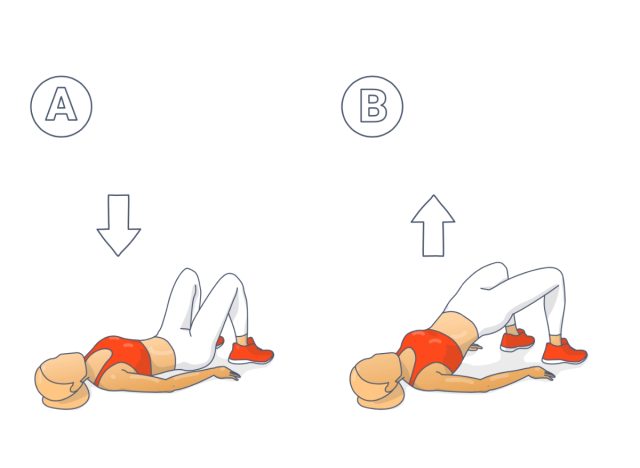

The new guidelines, now introduced in the UK and around the world, apply to content that: idealises some physical features over others, such as beauty routines to make your nose look slimmer; idealises fitness or body weights, such as exercise routines that encourage pursuing a certain look; or encourages social aggression, such as physical intimidation.

YouTube will no longer make repeated recommendations of those topics to teenagers who have registered their age with the platform as logged-in users. The safety framework has already been introduced in the US.

“A higher frequency of content that idealises unhealthy standards or behaviours can emphasise potentially problematic messages – and those messages can impact how some teens see themselves,” said Allison Briscoe-Smith, a clinician and YouTube adviser. “‘Guardrails’ can help teens maintain healthy patterns as they naturally compare themselves to others and size up how they want to show up in the world.”

In the UK, the newly introduced Online Safety Act requires tech companies to protect children from harmful content and to consider how their algorithms may expose under-18s to damaging material. The act refers to algorithms’ ability to cause harm by pushing large amounts of content to a child over a short space of time, and requires tech companies to assess any risk such algorithms could pose to children.

Sonia Livingstone, a professor of social psychology at the London School of Economics, said a recent report by the Children’s Society charity underlined the importance of tackling social media’s impact on self-esteem. A survey in the Good Childhood report showed that nearly one in four girls in the UK were dissatisfied with their appearance.

“There is at least a recognition here that changing algorithms is a positive action that platforms like YouTube can take,” Livingstone said. “This will be particularly beneficial for young people with vulnerabilities and mental health problems.”